Abstract

The main purpose of this essay is to examine the varying levels of data-driven practices across different fields and explore the future of decision-making based on data. It discusses how companies use data to drive strategies and addresses the challenges and opportunities ahead.

Keywords

Data-driven companies, Business and data, future of decision-making, business intelligence, machine learning, data storages, big data

Introduction

The rise of data-driven decision-making has transformed the way modern businesses think from the ground up. Companies that effectively leverage data are increasingly setting themselves apart from competitors, not just by making informed decisions but by actively shaping market trends. This essay explores what it means to be a truly data-driven company, highlighting the varying levels of adoption across industries and examining the opportunities and challenges that may arise along the way.

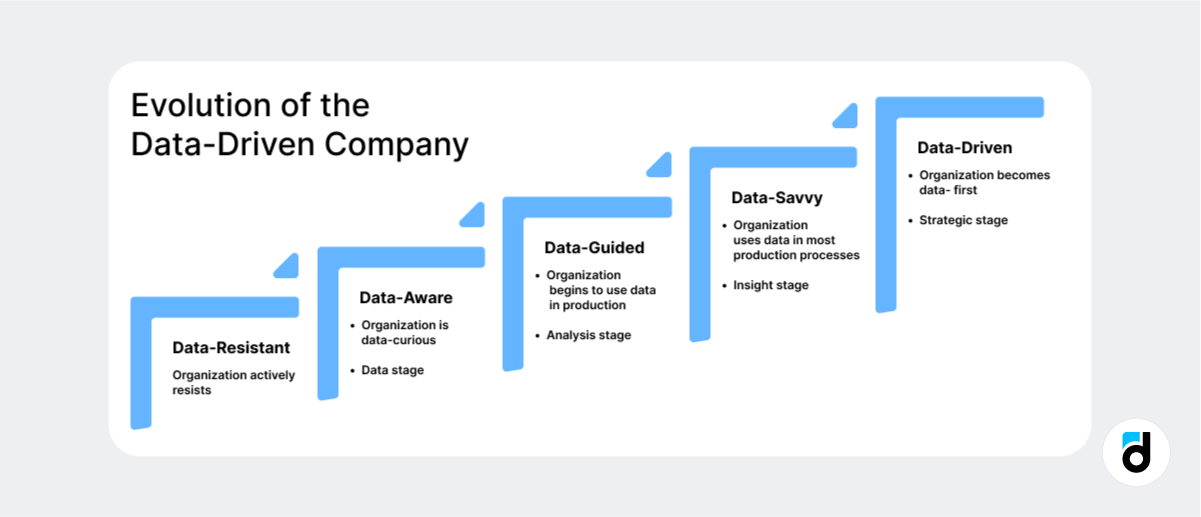

Organizations may begin at a Data-Resistant stage, actively avoiding data-driven approaches. As they become more curious, they move to the Data-Aware stage, followed by Data-Guided, where data starts to influence decisions. As the organization’s data practices mature, they become Data-Savvy, with insights driving key processes, ultimately transforming into a fully Data-Driven company, where data is embedded in strategic decision-making at every level.

From utilizing data to drive strategic initiatives to facing challenges in data security and ethics, the concept of being data-driven is complex and evolving. Studies have shown that data-driven business models can improve competitiveness, productivity, and customer loyalty significantly (An Exploratory Analysis of the Current Status and Potential of Service-Oriented and Data-Driven Business Models within the Sheet Metal Working Sector, n.d.).

Figure 1 – Evolution of a Data-Driven Company

(What Is Data-Driven vs Data-Informed, 2023)

1 Defining a Data-Driven Company

A data-driven company is an organization that prioritizes data as a central asset for driving decision-making at all levels—from daily operations to strategic initiatives. Being data-driven means more than just having data; it requires a shift in culture, technology, and operations to actively leverage data in every aspect of business activities. However, achieving this level of maturity is neither straightforward nor attainable for all businesses or sectors. This section explores what it means to be a data-driven company, the stages of development, and the obstacles that make it challenging for some industries to adopt this model.

1.1 Definition of a Data-Driven Company

A data-driven company systematically uses data to inform its business decisions, optimize operations, and understand customer needs. This approach distinguishes itself from traditional models that rely heavily on intuition or past experiences. Data-driven companies are characterized by their data culture, technology infrastructure, data literacy among employees, and an executive commitment to evidence-based decision-making.

Companies such as Netflix and Amazon serve as prime examples of organizations that have integrated data into their core decision-making processes. For instance, Netflix uses extensive data analytics to recommend content, predict trends, and even guide content production, making it a quintessential data-driven business (van Es, 2023).

1.1.1 More examples of Data-Driven companies

Several companies across different sectors have successfully embraced data-driven decision-making. Here are some notable examples:

- Amazon: Amazon uses customer data to personalize shopping experiences, predict demand, and optimize inventory. Data is used in almost every decision-making process, from supply chain management to product recommendations, making Amazon a leader in data-driven retail.

- Google: Google uses data-driven decision-making not only in its search engine algorithms but also in marketing and user experience optimization. Every decision, from ad placement to software updates, is tested and validated with data to ensure maximum effectiveness.

- Starbucks: Starbucks employs data analytics to understand customer preferences and optimize store locations. The company analyzes purchasing patterns and customer feedback to make informed decisions about product offerings and store design, enhancing the customer experience and profitability.

- Uber: Uber uses data to match riders with drivers efficiently, predict areas of high demand, and optimize pricing through its dynamic pricing model. This real-time data-driven approach helps Uber enhance the user experience while optimizing driver allocation and pricing strategies.

These examples illustrate how companies can successfully leverage data to drive decisions that enhance efficiency, customer satisfaction, and overall competitiveness (Sachdeva, 2023).

1.2 The Path to Becoming Data-Driven: Stages of Evolution

The journey to becoming data-driven is gradual and involves multiple stages of transformation. Not all companies begin as data-driven, nor is this transformation feasible for every organization. The Evolution of a Data-Driven Company illustrates a clear progression that a company may undergo:

- Data-Resistant: The company actively resists data-driven approaches, often due to a preference for traditional methods or a lack of understanding about data’s value.

- Approximately 28% of organizations fall into this category, lacking even the fundamental ability to leverage data effectively (Horton, 2022).

- Data-Aware: The organization becomes curious about data and begins exploring how data can be beneficial, although adoption is limited.

- Roughly 32% of companies are “data-aware,” showing some interest in data, but they often struggle with converting data insights into tangible actions. (Horton, 2022)

- Data-Guided: The organization starts to use data in production processes but mainly as support rather than a primary decision driver.

- 22% of companies are in the data-guided phase, using data to inform decisions but without embedding it deeply into their core business strategies (Horton, 2022).

- Data-Savvy: The organization uses data in most of its production processes, with data analysis informing insights and decision-making.

- About 13% of companies have reached the data-savvy level, where data-driven insights are central to key operational processes. (Horton, 2022)

- Data-Driven: The company becomes fully data-first, embedding data analytics into all strategic decisions.

- Only 3% of organizations have achieved full data maturity, making data an integral part of all strategic initiatives and business models. (Horton, 2022)

While these stages reflect a potential pathway to becoming data-driven, not all businesses can or should aim to reach the final stage. Service-oriented and data-driven models are not widely adopted in all industries, particularly in manufacturing sectors, where companies require significant support for implementation (Horton, 2022).

The journey to becoming data-driven is not instantaneous, as discussed in the introduction. Businesses often progress through varying levels of data maturity. Not every business or industry can realistically achieve the highest level of data integration, and for some, it may not be the most beneficial path. The lack of data maturity continues to be a barrier for many companies, impacting their ability to achieve growth and improve efficiency across multiple domains, including sales, innovation, customer experience, and internal operations (Horton, 2022).

1.3 Challenges of Becoming Data-Driven

1.3.1 Technological Barriers

The shift to being data-driven is heavily reliant on the right technological infrastructure. Implementing modern data storage, analysis, and visualization tools can be costly, and not all companies can handle this burden. Many small and medium-sized enterprises (SMEs), particularly those in traditional sectors like manufacturing, face barriers such as a lack of resources and knowledge to properly implement these changes. In the sheet metal working sector, for instance, many companies still struggle with integrating service-oriented and data-driven business models, as they lack awareness, value recognition, and an understanding of the change process. Some may not even know that such models could benefit their business.

1.3.2 Cultural Shift and Data Literacy

Another challenge is fostering a data-oriented culture. Becoming data-driven requires a shift in mindset: executives and employees alike must be willing to trust and rely on data over instinct, which in turn requires that both the data sources and the analysis be demonstrably reliable. However, creating such a culture takes time and requires substantial investments in data literacy training and change management. This transformation is often difficult for established companies with ingrained habits and norms. As highlighted in the introduction, reaching the data-driven stage often means overcoming substantial cultural barriers that are not easily tackled, especially in established organizations.

1.3.3 Industry-Specific Limitations

It is also important to note that not all industries and sectors are poised to benefit equally from becoming data-driven. In industries where data collection is costly or complicated, such as agriculture or parts of healthcare, the transition to a data-driven model may not always be cost-effective. Moreover, the relevance of becoming fully data-driven varies depending on how critical rapid, data-informed decisions are for competitiveness. The healthcare industry, for example, benefits immensely from data-driven approaches in research and diagnostics, but smaller healthcare providers may struggle to implement sophisticated data systems without external support.

1.4 Why Not Every Business Can Be Data-Driven

Becoming a data-driven company is not an automatic or universally advantageous goal for every business. The key to understanding whether a company should strive for data-driven maturity is recognizing the value of data within its specific context. Some industries, such as technology, retail, and finance, have natural advantages due to the large amounts of consumer data they generate and can easily analyze. In contrast, sectors where data availability is low or where operations are deeply human-driven may find it less beneficial to pursue this transformation. Additionally, achieving true data-driven operations demands resources and ongoing support, which makes it feasible for only some organizations to undertake this journey.

For instance, the manufacturing industry shows that achieving data maturity is challenging without adequate infrastructure and support. Studies have noted that while data-driven approaches can ensure competitiveness and operational efficiency, companies need structured approaches to overcome obstacles like data literacy and maturity. (Gogineni et al., 2020)

2 Technologies and Tools for Data-Driven Decision-Making (DDDM)

2.1 Data Collection and Storage Technologies

Companies must utilize appropriate data collection and storage technologies to become data-driven effectively. These technologies form the foundation upon which all data-driven activities are built, allowing for data accumulation, management, and use in real-time decision-making and strategic planning. (Oseremi Onesi-Ozigagun et al., 2024)

2.1.1 Data Warehouses and Data Lakes

In modern enterprise data storage, data warehouses and data lakes are two fundamental technologies (Belov et al., 2021; Hanine et al., 2021). A data warehouse is a central repository used to store structured, filtered, and processed data, often from various parts of the organization. Data warehouses are designed to work well with business intelligence (BI) tools, so they must support efficient data collection and Online Analytical Processing (OLAP), which lets data analysts view detailed information in reports or conduct complex analysis operations. The use of ETL (Extract, Transform, Load) processes ensures that the data contained in warehouses is clean, consistent, and ready for analysis.

By contrast, data lakes store raw, unstructured, or semi-structured data exactly as it is created. This means that data lakes can accommodate all kinds of data (log files, social media content, and sensor data) without predefined schemas, which provides enhanced flexibility. Data lakes are therefore well suited to data scientists and analysts who need to run exploratory analyses on raw data or apply machine learning models. Whereas data warehouses rely on ETL, data lakes use ELT (Extract, Load, Transform) processes, so data can be ingested more quickly without the need for immediate transformation.

Data warehouses and data lakes differ in their purpose and in the kinds of content they hold. Data warehouses are best suited to structured, historical data used in operational reporting. Data lakes, on the other hand, are better equipped for real-time analysis and for working with many different types of data. In practice, most organizations use both technologies side by side and combine them in a hybrid architecture: data lakes provide an agile platform for quick decisions, while data warehouses provide stability and good performance.
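The difference between the two storage models can be illustrated with a minimal Python sketch. It uses only the standard library, and every name in it (the event fields `user_id` and `amount`, the function names) is a hypothetical choice for illustration rather than part of any real warehouse or lake product. The warehouse function validates and transforms records before storing them, while the lake function stores everything as received and applies a schema only when the data is read.

```python
import json

# Hypothetical raw events, as they might arrive from an application log.
RAW_EVENTS = [
    '{"user_id": 1, "amount": "19.99", "note": "first order"}',
    '{"user_id": 2, "amount": "5.00"}',
    '{"user_id": "n/a", "clicks": 7}',  # does not fit the sales schema
]


def load_into_warehouse(raw_events):
    """Schema-on-write: validate and transform before storing."""
    table = []
    for line in raw_events:
        record = json.loads(line)
        # Only records that match the predefined schema are stored.
        if isinstance(record.get("user_id"), int) and "amount" in record:
            table.append({"user_id": record["user_id"],
                          "amount": float(record["amount"])})
    return table


def load_into_lake(raw_events):
    """Schema-on-read: store every event exactly as it was created."""
    return list(raw_events)


def read_sales_from_lake(lake):
    """Apply the sales schema only at query time."""
    sales = []
    for line in lake:
        record = json.loads(line)
        if isinstance(record.get("user_id"), int) and "amount" in record:
            sales.append(float(record["amount"]))
    return sales


warehouse = load_into_warehouse(RAW_EVENTS)
lake = load_into_lake(RAW_EVENTS)
print(len(warehouse), "rows in warehouse;", len(lake), "objects in lake")
print("total sales read from lake:", round(sum(read_sales_from_lake(lake)), 2))
```

The third event is lost to the warehouse but remains available in the lake for a later analysis with a different schema, which is the flexibility described above.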

2.1.2 Cloud Storage Solutions

Cloud storage is now an integral element of modern data strategies. Cloud solutions offer scalable yet affordable storage. American technology giants Amazon Web Services (AWS), Google Cloud Platform (GCP), and Microsoft Azure let companies large and small store petabytes of data without being hampered by the physical infrastructure or logistical difficulties that accompany on-premises architecture (Comparing AWS, Azure, GCP | DigitalOcean, 2023).

Cloud storage provides several benefits:

- Scalability – With cloud storage, companies can easily adjust the size of their data repositories depending on demand. AWS, Azure, and GCP all provide highly flexible storage options that allow companies to quickly scale up their data storage capacity as they grow (Comparing AWS, Azure, GCP | DigitalOcean, 2023).

- Cost Efficiency – All three leading cloud providers offer pay-as-you-go pricing models, so enterprises pay only for the resources they use and avoid both over-provisioning and sinking capital expenditure into capacity that goes unused (Comparing AWS, Azure, GCP | DigitalOcean, 2023). For instance, AWS has a pricing model that can help enterprises optimize storage spending by aligning costs with actual usage.

- Security – Each provider offers advanced features such as encryption, access control, and multi-factor authentication to protect sensitive data from unauthorized access (Comparing AWS, Azure, GCP | DigitalOcean, 2023). These measures are essential for ensuring customer trust and compliance with data privacy regulations in various industries.

- Global Accessibility – Cloud storage makes data accessible from anywhere in the world, so teams can work together effectively wherever they are. The primary cloud providers operate multiple data centres around the world, which gives a high degree of availability and reliability (Comparing AWS, Azure, GCP | DigitalOcean, 2023). Business units can also share these data centres, which provides another foundation for real-time teamwork (Comparing AWS, Azure, GCP | DigitalOcean, 2023).

In addition to serving as stand-alone storage, cloud storage services integrate closely with data processing and machine learning services from the same provider to form a single ecosystem for data-driven operations. For example, Amazon S3 works in tandem with AWS Lambda for serverless data processing and with Amazon SageMaker for machine learning. Google Cloud and Azure provide similar services that simplify data analysis and model training without expensive infrastructure investments, letting businesses glean insights quickly and efficiently (Comparing AWS, Azure, GCP | DigitalOcean, 2023).

These cloud platforms not only deliver the storage solutions an enterprise needs today but also allow data to be managed from beginning to end, making them an essential part of a data-driven organization.
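The cost argument behind pay-as-you-go pricing can be made concrete with a small arithmetic sketch in Python. All numbers here are made-up illustration values, not real prices from AWS, Azure, or GCP, and the comparison assumes, purely for simplicity, that fixed capacity costs the same per gigabyte as on-demand capacity.

```python
# All prices and capacities are hypothetical illustration values,
# not real rates from any cloud provider.
PRICE_PER_GB_MONTH = 0.02         # assumed price per stored GB per month
PROVISIONED_CAPACITY_GB = 10_000  # assumed fixed capacity sized for peak demand


def pay_as_you_go_cost(monthly_usage_gb, price=PRICE_PER_GB_MONTH):
    """Cost when the bill follows the storage actually used each month."""
    return sum(usage * price for usage in monthly_usage_gb)


def provisioned_cost(months, capacity_gb=PROVISIONED_CAPACITY_GB,
                     price=PRICE_PER_GB_MONTH):
    """Cost when peak capacity is paid for in every month."""
    return months * capacity_gb * price


# A growing company: storage doubles each month but never reaches the peak.
usage = [1_000, 2_000, 4_000, 8_000]
on_demand = pay_as_you_go_cost(usage)
fixed = provisioned_cost(len(usage))
print(f"pay-as-you-go: {on_demand:.2f}  provisioned: {fixed:.2f}")
```

Under these assumptions the provisioned setup pays for capacity that sits idle during the early months, which is the over-provisioning risk that usage-based pricing is meant to avoid.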

2.1.3 Big Data Technologies

Nowadays, there is more data generated by more businesses—at varying volumes and velocities—than ever before. To gather and process this ocean of information effectively, powerful big-data technologies are required. Technologies like Apache Hadoop, Apache Spark, and various NoSQL Databases are commonly used for this purpose. These tools enable today’s businesses to handle the complexities of extensive information (Top Big Data Technologies You Must Know in 2024, 2024).

Apache Hadoop is an open-source framework for distributed storage and processing. Hadoop stores data in HDFS (Hadoop Distributed File System), while MapReduce is used for processing large datasets in parallel. Hadoop is highly fault-tolerant and can manage very large volumes of structured and unstructured data, making it ideal for firms with large-scale data needs. It is one of the most efficient ways to store and process extensive data for organizations of all sizes (Top Big Data Technologies You Must Know in 2024, 2024). Additionally, Hadoop enables smaller companies to analyze a wide variety of data efficiently, with MapReduce being a key aspect of its effectiveness.
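The MapReduce programming model mentioned above can be sketched in a few lines of plain Python. This is a single-process illustration of the map, shuffle, and reduce phases using the classic word-count task. It does not use Hadoop itself, and the function names are chosen for this example only. In a real cluster, the map and reduce steps would run in parallel on many machines over data stored in HDFS.

```python
from collections import defaultdict


def map_phase(document):
    """Map: emit a (word, 1) pair for every word in one document."""
    return [(word.lower(), 1) for word in document.split()]


def shuffle_phase(mapped_pairs):
    """Shuffle: group all emitted values by their key."""
    groups = defaultdict(list)
    for key, value in mapped_pairs:
        groups[key].append(value)
    return groups


def reduce_phase(groups):
    """Reduce: combine the values of each key into a single result."""
    return {key: sum(values) for key, values in groups.items()}


documents = ["big data needs big tools", "data drives decisions"]
# Each document could be mapped on a different node; here it is sequential.
mapped = [pair for doc in documents for pair in map_phase(doc)]
word_counts = reduce_phase(shuffle_phase(mapped))
print(word_counts)
```

Because each document is mapped independently and each key is reduced independently, the work splits naturally across machines, which is what makes the model suitable for large datasets.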

Apache Spark is another open-source data processing system that offers distinct advantages over Hadoop. Unlike Hadoop’s MapReduce, Spark can keep data in memory, which shortens processing time significantly and makes it suitable for real-time data tasks. Spark’s versatility allows it to handle streaming data, machine learning jobs, and interactive analytics, making it an ideal solution for large-scale, real-time analytics (Top 8 Big Data Platforms and Tools in 2024 | Turing, n.d.). Open-source software like Spark is also highly valuable for scholars and individuals learning about computer science at home who may not have access to a research laboratory.

NoSQL databases such as MongoDB (a document-oriented database), Cassandra, and HBase are designed to store unstructured or semi-structured data. Unlike traditional relational databases, NoSQL databases offer substantial flexibility, as they do not require a rigid schema to manage large volumes of data. This flexibility is crucial for today’s big data applications, which must handle varied kinds of data while remaining scalable (Top Big Data Technologies You Must Know in 2024, 2024). NoSQL databases are therefore well suited to storing the data behind streaming applications, machine learning models, and interactive analytics.

The ability to process and analyze big data in real time is critical for decision-makers. For example, Netflix uses big data tools to analyze user behavior, preferences, and streaming patterns to offer personalized recommendations, which not only enhances user experience but also promotes the retention of its subscribers. (Top 8 Big Data Platforms and Tools in 2024 | Turing, n.d.; Top Big Data Technologies You Must Know in 2024, 2024)

2.1.4 Data Integration Tools

To become a data-driven organization, companies need to integrate data from diverse sources such as CRM systems, ERP systems, IoT devices, and social media platforms, and convert it into a uniform format.

ETL (Extract, Transform, Load) tools such as Talend, Informatica, and Microsoft SSIS play an important role at this stage and reduce the overhead of transforming data by hand. They take data from various sources and put it into a consistent, usable form. ETL processes ensure that the data is of good quality, uniform, and consistently available throughout an organization, which provides a solid foundation for analytics. For instance, Talend includes extensive data quality checks and powerful transformations, which make it easier for firms to maintain strict standards over long-term operations (Prevalova, 2024).

ELT (Extract, Load, Transform) tools, such as Fivetran or Stitch, promise setups that scale better in modern cloud-based data environments. Instead of extracting, transforming, and only then loading data as ETL tools do, ELT tools load raw data straight into the storage system, where it is transformed afterwards. This strategy works well in the cloud, taking advantage of its scalability and processing power. Fivetran and Stitch both simplify integration by connecting different types of data sources to cloud-based data warehouses. This significantly decreases manual workloads and increases flexibility when implementing enterprise integration solutions (Prevalova, 2024).
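As a rough illustration of what such integration tools automate, the following Python sketch runs a tiny ETL pipeline over two hypothetical source systems. The field names (`CustomerID`, `cust_no`, `total_eur`) and the sample rows are invented for this example and do not correspond to Talend, Fivetran, or any real CRM or ERP export format. An ELT pipeline would call the same steps in a different order, loading the raw rows first and transforming them inside the target system.

```python
# Hypothetical exports from two source systems with different conventions.
CRM_ROWS = [{"CustomerID": "17", "Email": " Ana@Example.com "}]
ERP_ROWS = [{"cust_no": 17, "total_eur": "120.50"},
            {"cust_no": 42, "total_eur": "80"}]


def extract():
    """Extract: read the raw rows from each source."""
    return CRM_ROWS, ERP_ROWS


def transform(crm_rows, erp_rows):
    """Transform: unify keys, clean values, and merge both sources."""
    customers = {}
    for row in crm_rows:
        cid = int(row["CustomerID"])
        customers[cid] = {"customer_id": cid,
                          "email": row["Email"].strip().lower(),
                          "revenue": 0.0}
    for row in erp_rows:
        cid = int(row["cust_no"])
        record = customers.setdefault(
            cid, {"customer_id": cid, "email": None, "revenue": 0.0})
        record["revenue"] += float(row["total_eur"])
    return sorted(customers.values(), key=lambda r: r["customer_id"])


def load(records, target):
    """Load: append the uniform records to the target table."""
    target.extend(records)
    return target


warehouse_table = load(transform(*extract()), [])
for row in warehouse_table:
    print(row)
```

Customer 42 appears only in the ERP data, so the merged record keeps an empty email field instead of being dropped, which is one simple way to keep gaps between sources visible.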

Data virtualization tools like Denodo and Red Hat JBoss Data Virtualization, on the other hand, introduce a method of integration that leaves the data in its source systems. They provide data virtually, in real time, without having to physically move or copy it, which reduces the complexity, time, and cost of data integration dramatically.

Data virtualization enables decision-makers to immediately access integrated data views, regardless of where the original data source is located, which provides quick insight for both management and operational decisions (Prevalova, 2024). This real-time ability is particularly important for companies that need to remain agile in a rapidly changing environment.

Semantic Data Dictionaries (SDDs), as recent research has pointed out, also contribute substantially to data integration processes. SDDs provide rules for formally representing the semantics of data, which makes harmonization across different data sets much easier. Unlike traditional data dictionaries, SDDs are machine-readable, and their structure supports both explicit and implicit data concept annotations. This in turn allows data to be integrated automatically and made interoperable regardless of the variety of sources it comes from. For instance, SDDs help integrate data through standardized ontological terms and maintain a detailed code mapping of categorical values. They ensure that data from different systems can be understood and used effectively without a great deal of manual transformation work (Rashid et al., 2020).
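The two mechanisms named above, standardized terms for columns and a code mapping for categorical values, can be sketched with a toy dictionary in Python. This is a heavily simplified illustration and not the SDD specification described by Rashid et al. The source names, column names, and `ex:` terms are placeholders that do not come from a real ontology.

```python
# A toy semantic data dictionary. All terms are placeholders,
# not identifiers from a real ontology.
SDD = {
    "hospital_a": {
        "columns": {"sex": "ex:BiologicalSex", "wt_kg": "ex:BodyWeightKg"},
        "codes": {"sex": {"1": "ex:Male", "2": "ex:Female"}},
    },
    "hospital_b": {
        "columns": {"gender": "ex:BiologicalSex", "weight": "ex:BodyWeightKg"},
        "codes": {"gender": {"M": "ex:Male", "F": "ex:Female"}},
    },
}


def harmonize(source, row):
    """Rename columns to shared terms and translate categorical codes."""
    entry = SDD[source]
    result = {}
    for column, value in row.items():
        term = entry["columns"][column]
        codes = entry["codes"].get(column, {})
        # Values without a code mapping (e.g. measurements) pass through.
        result[term] = codes.get(str(value), value)
    return result


row_a = harmonize("hospital_a", {"sex": 2, "wt_kg": 61.5})
row_b = harmonize("hospital_b", {"gender": "F", "weight": 70})
print(row_a)
print(row_b)
```

After harmonization both rows use the same keys and the same category labels, so they can be combined without hand-written, source-specific conversion code.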

Data integration is crucial for organizations wanting to leverage insights from data scattered across different departments and systems. Through proper integration, data from many sources can be efficiently used for analysis, reporting, and decision-making. By using a mix of ETL, ELT, Data Virtualization, and Semantic Data Dictionaries, companies can streamline their data integration processes, eliminate duplication, and create a unified picture of their enterprise-wide information which is essential for making informed decisions.

2.1.5 Streaming Data and Real-Time Processing

Increasingly, companies need to process data in real time to survive. This is especially true in the finance, retail, and transportation industries, where decision speed matters most. Businesses that lack this ability are at a great disadvantage compared to rivals that can process data in real time, while those that have it grow even stronger by putting information to use more rapidly. Technologies like Apache Kafka and Amazon Kinesis are widely adopted for streaming data processing because they can deal with sudden bursts of information in real time, enabling businesses to act on their findings without delay (ByteByteGo, 2024).

Apache Kafka is a distributed streaming platform that provides its clients with a high-throughput, unified, low-latency data pipeline capable of handling trillions of messages and petabytes of data every day. At Uber, it carries information from user applications such as the driver and rider apps into microservices with high performance (ByteByteGo, 2024). Kafka supports both batch and real-time workloads in Uber’s data systems, making it suitable for large-scale environments that require fault-tolerant service with continuous data feeds. Uber’s Kafka deployment also has specific features such as Cluster Federation for improved scaling, Dead Letter Queues (DLQ) to handle message failures in a manageable way, and Cross-Cluster Replication through uReplicator, so that data remains available globally even when one region fails. These features make Kafka an indispensable part of Uber’s ability to follow user behavior and manage business operations with consistent, real-time pricing across services.
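The dead letter queue idea can be shown without a running Kafka cluster. The Python sketch below simulates a consumer with an in-memory queue: a message that keeps failing is retried a limited number of times and then set aside in a separate list, so that it does not block the messages behind it. The retry limit, the `fare` field, and the handler are assumptions made for this example and are not taken from Uber’s implementation or from the Kafka client API.

```python
from collections import deque

MAX_ATTEMPTS = 3  # assumed retry limit for this sketch


def process(message):
    """Hypothetical handler that fails on messages without a 'fare' field."""
    if "fare" not in message:
        raise ValueError("missing fare")
    return message["fare"]


def consume(messages, max_attempts=MAX_ATTEMPTS):
    """Process messages, retrying failures and parking repeated failures."""
    main_queue = deque((message, 1) for message in messages)
    processed, dead_letters = [], []
    while main_queue:
        message, attempt = main_queue.popleft()
        try:
            processed.append(process(message))
        except ValueError as error:
            if attempt < max_attempts:
                # Re-queue at the back so later messages are not blocked.
                main_queue.append((message, attempt + 1))
            else:
                # Give up and keep the message for later inspection.
                dead_letters.append({"message": message, "error": str(error)})
    return processed, dead_letters


processed, dead_letters = consume([{"fare": 12.5}, {"rider": "r1"}, {"fare": 8.0}])
print("processed:", processed)
print("dead letters:", dead_letters)
```

The valid messages are processed in order, while the malformed one ends up in the dead letter list together with the reason for the failure.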

Amazon Kinesis is an AWS real-time data processing service that allows companies to ingest, process, and analyse streaming data in near real time. Kinesis is widely used across industries by AWS’s large customer base for analysing streaming data, including application performance monitoring, financial transactions, and customer activity data (ByteByteGo, 2024). For companies already using AWS infrastructure, Kinesis integrates with little additional change because it is part of the same platform. Outside of the most complex setups, companies can simply bring their data into Kinesis, identify events of interest in the stream, and respond to them automatically, without additional human intervention.

Streaming data technologies help companies react instantly to market feedback. For example, Uber uses real-time data to adjust its dynamic pricing model based on current supply and demand, enabling efficient driver allocation and maximizing profits (ByteByteGo, 2024). To keep this capability available, Uber uses strategies such as an Active-Active Kafka setup that extends across multiple regions, which ensures that if one region fails, another is readily available without interruption. This type of application is critical in industries where swift market changes require immediate action by companies to remain competitive.
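A supply-and-demand pricing rule of this kind can be sketched as a simple function. The formula below, a multiplier equal to the ratio of open requests to available drivers and limited to a fixed range, is an invented illustration and not Uber’s actual pricing algorithm. The cap of 3.0 and the sample numbers are likewise assumptions.

```python
def surge_multiplier(open_requests, available_drivers, cap=3.0):
    """Illustrative rule: scale with the demand/supply ratio, within [1, cap]."""
    if available_drivers <= 0:
        return cap  # no supply at all: charge the maximum multiplier
    ratio = open_requests / available_drivers
    return max(1.0, min(cap, ratio))


def price(base_fare, open_requests, available_drivers):
    """Apply the current multiplier to a base fare."""
    multiplier = surge_multiplier(open_requests, available_drivers)
    return round(base_fare * multiplier, 2)


# The same trip priced under three hypothetical market situations.
print(price(10.0, open_requests=5, available_drivers=10))    # more drivers than riders
print(price(10.0, open_requests=20, available_drivers=10))   # twice as many riders
print(price(10.0, open_requests=100, available_drivers=10))  # extreme demand, capped
```

In a streaming setup, the two counts would be recomputed continuously from incoming events, so the price follows the market with only a short delay.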

2.2 Data Processing and Analysis Tools

To realize the full potential of data-driven decision-making, any company that deals with data needs effective tools for processing and analyzing it. Enterprises use these tools to turn raw data into actionable insights that can guide business strategy and operations. This section reviews several types of data processing and analysis tools and explains their usage and significance.

2.2.1 Business Intelligence Platforms

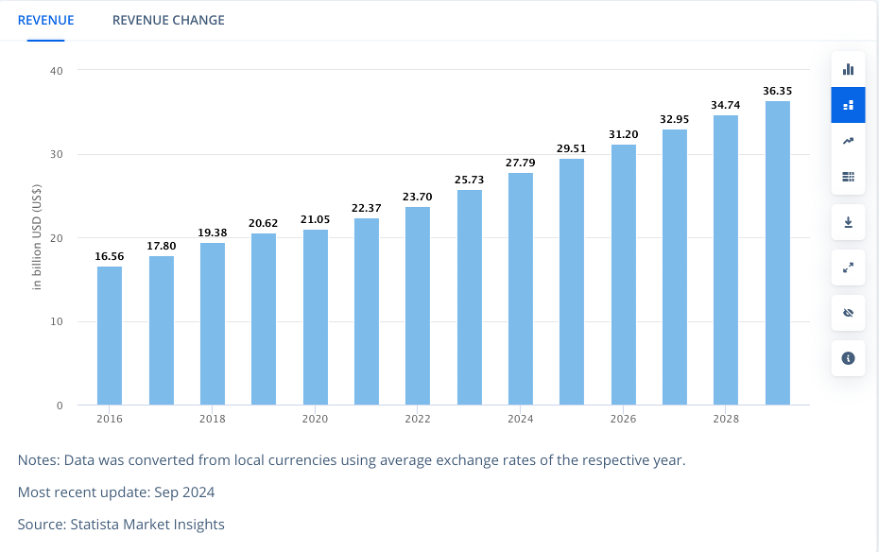

Business intelligence (BI) platforms are becoming increasingly important in a world of massive data growth. In 2019, the global BI market was valued at $20.6 billion and was projected to reach $39.35 billion by 2027, a compound annual growth rate (CAGR) of 8.5% (Lees, 2021). According to more recent data from September 2024 (Figure 2), however, that prediction was too optimistic.

Figure 2 – BI Market Size, September 2024 (Business Intelligence Software – Global | Market Forecast, 2024)
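The growth rate quoted for the 2019 projection can be checked with the standard CAGR formula, (end value / start value)^(1 / years) - 1. The short Python sketch below applies it to the two market sizes cited from Lees (2021). The function name is chosen for this example, and the eight-year span assumes the projection runs from 2019 to 2027.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values over a number of years."""
    return (end_value / start_value) ** (1 / years) - 1


# Market sizes in billions of US dollars, as cited in the text.
rate = cagr(start_value=20.6, end_value=39.35, years=2027 - 2019)
print(f"implied CAGR: {rate:.2%}")
```

With these inputs the result comes out slightly below 8.5%, which is consistent with the rounded rate reported in the source.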

These platforms are the point where the analyst meets the manager or decision-maker: data is connected to models and visualized in a comprehensible form. BI platforms thus play a critical role in making data insights accessible and actionable for stakeholders throughout the organization.

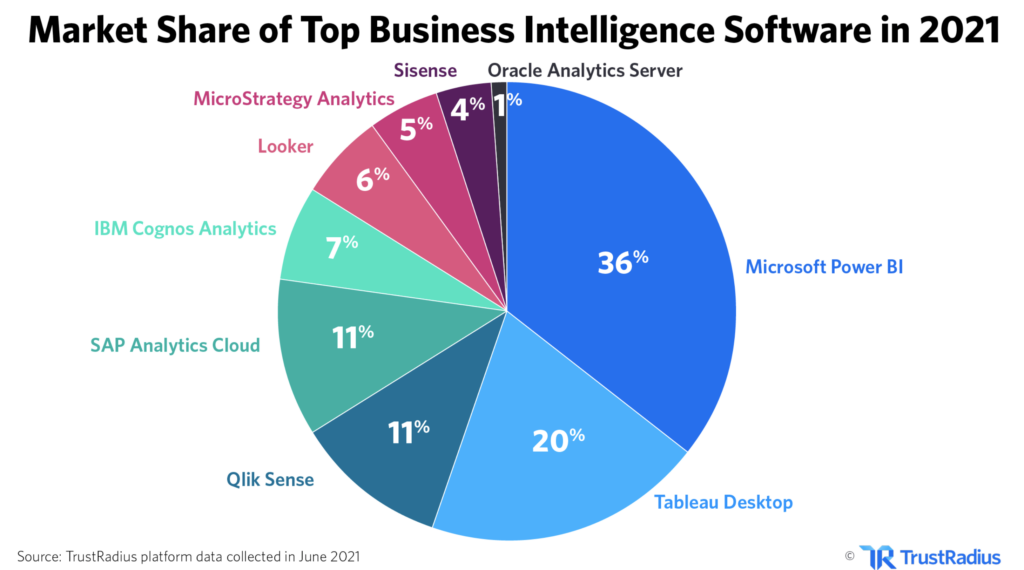

One of the most notable aspects of the current BI landscape is the market share commanded by major players, one of which is Microsoft’s Power BI. Power BI leads the market with a 36% share, followed by Tableau, Qlik, and SAP (Figure 3; Lees, 2021).

Figure 3 – Market Share of Top BI Software

(Lees, 2021)

Interestingly, the average business uses 3.8 different BI tools, highlighting a fragmented landscape in which organizations are still finding the best mix of tools to meet their needs. This may also reflect a shortage of well-qualified data analysts in the labor market. Although 67% of the global workforce has access to BI tools, only 27% of executives say their data projects result in actionable insights, which raises the question of whether spending on expensive BI systems pays off for companies that cannot put them to use. This gap highlights ongoing challenges with data literacy and proper implementation (Lees, 2021).

The Impact of BI Post-COVID

The COVID-19 pandemic significantly influenced the adoption of BI tools. 50% of teams and customers are more likely to use BI tools now than before the pandemic, and 55% of teams are maintaining or increasing their BI spending (Lees, 2021). This increased adoption is largely due to the necessity for data-driven decision-making during uncertain times, especially when teams work remotely. The ability of BI platforms to offer insights quickly and effectively has made them indispensable for organizations looking to remain agile and competitive.

Despite its benefits, BI adoption is not without challenges. Seventy-four percent of respondents work with data, yet only thirty-seven percent trust their decisions more when those decisions are based on data (TrustRadius, 2021). There is also the issue of data quality, with thirty-four percent of BI companies citing poor data quality as a major issue for their customers. These statistics suggest that although BI is the right approach, organizations are still quite far from the ideal data quality that is often assumed in classroom examples.

2.2.2 Machine Learning Frameworks

By automating certain aspects of data analysis, Machine Learning (ML) frameworks help companies build models that predict results and identify trends. ML is a subset of artificial intelligence that allows systems to learn from data, detect patterns, and make decisions without much human intervention. Machine learning is already used in real-life scenarios such as predicting equipment failures before they occur, recommending individualized content to users, and forecasting prices in dynamic markets (What Is Machine Learning?, n.d.).

Some common ML frameworks are:

- TensorFlow: Developed by Google, TensorFlow is an open-source machine learning library that makes it simple to create and train machine-learning models.

- Scikit-Learn: This Python library is especially suitable for beginners because of its simple interface. It provides tools for data mining and data analysis, such as classification, regression (linear or logistic), and clustering, and it can be useful for exploring a dataset that is not yet well understood.

- PyTorch: Developed by Facebook (now Meta), PyTorch is a popular pick among researchers because it can be applied efficiently to deep learning tasks, and its flexibility is generally seen as outweighing its drawbacks compared with other frameworks.

Machine learning models can generally be categorized as supervised or unsupervised learning:

- Supervised Learning: This uses labeled data to learn. Common algorithms include:

- Logistic Regression: Used for binary classification problems such as spam email detection.

- Support Vector Machines: Create a hyperplane in n-dimensional space to classify data points.

- Decision Trees: Split data into branches based on meaningful criteria in order to separate inputs that belong to different categories.

- Linear Regression: Predicts the value of a variable based on its linear relationship with input features.

- Random Forest: Combines multiple decision trees to improve accuracy and reduce overfitting.

- Boosting Algorithms: Use ensemble learning to improve on a single model by training models sequentially, each correcting errors made by the previous ones, e.g., XGBoost.

- Unsupervised Learning: This uses unlabeled data to learn. A well-known algorithm is:

- k-Means Clustering: Groups similar data points together into clusters based on common characteristics.

(What Are Machine Learning Models?, 2022)
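To make the clustering idea concrete, the following is a minimal sketch of k-means written in plain Python. It is an illustration under stated assumptions, not the way any particular framework implements the algorithm: the function names (`k_means`, `squared_distance`, `mean_point`), the fixed random seed, and the small customer data set are all hypothetical.

```python
import random


def squared_distance(a, b):
    """Squared Euclidean distance between two points of equal dimension."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def mean_point(points):
    """Coordinate-wise mean of a non-empty list of points."""
    n = len(points)
    return tuple(sum(coords) / n for coords in zip(*points))


def k_means(points, k, max_iter=100, seed=0):
    """Group points into k clusters; returns (labels, centroids)."""
    rng = random.Random(seed)
    # Start from k distinct data points chosen at random (an assumption;
    # other initialisation schemes exist).
    centroids = rng.sample(points, k)
    labels = None
    for _ in range(max_iter):
        # Assignment step: each point joins its nearest centroid.
        new_labels = [
            min(range(k), key=lambda c: squared_distance(p, centroids[c]))
            for p in points
        ]
        if new_labels == labels:
            break  # assignments stopped changing, so the clusters are stable
        labels = new_labels
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                centroids[c] = mean_point(members)
    return labels, centroids


# Hypothetical customers: (orders per month, average basket value)
customers = [
    (1.0, 20.0), (2.0, 22.0), (1.5, 19.0),
    (9.0, 80.0), (10.0, 85.0), (9.5, 78.0),
]
labels, centroids = k_means(customers, k=2)
print(labels)
print(centroids)
```

No labels are supplied to the algorithm; the two customer groups emerge only from the distances between the data points, which is what distinguishes this approach from the supervised methods listed earlier.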

Such models allow companies to extract insights, automate decisions, and save labor across a wide range of tasks.
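As an illustration of how a supervised model can turn labeled data into an automated decision, the sketch below trains a logistic regression classifier with gradient descent in plain Python. Everything specific in it is an assumption made for the example: the two email features (number of links, number of promotional words), the labels, the learning rate, and the epoch count are hypothetical, and in practice a library such as Scikit-Learn would normally be used instead of hand-written training code.

```python
import math


def sigmoid(z):
    """Map a real-valued score to a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def score(weights, bias, x):
    """Linear score: weighted sum of the features plus the bias."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def train_logistic_regression(features, labels, learning_rate=0.05, epochs=5000):
    """Fit weights and bias by batch gradient descent on the log loss."""
    n = len(features)
    weights = [0.0] * len(features[0])
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for x, y in zip(features, labels):
            # Prediction error for this example (probability minus label).
            error = sigmoid(score(weights, bias, x)) - y
            for j, xi in enumerate(x):
                grad_w[j] += error * xi
            grad_b += error
        # Step against the average gradient.
        weights = [w - learning_rate * g / n for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b / n
    return weights, bias


def predict(weights, bias, x, threshold=0.5):
    """Return 1 (spam) if the predicted probability reaches the threshold."""
    return 1 if sigmoid(score(weights, bias, x)) >= threshold else 0


# Hypothetical labeled emails: (number of links, number of promotional words)
emails = [(5, 8), (6, 7), (7, 9), (4, 6), (0, 1), (1, 0), (1, 2), (0, 0)]
is_spam = [1, 1, 1, 1, 0, 0, 0, 0]

weights, bias = train_logistic_regression(emails, is_spam)
print(predict(weights, bias, (6, 8)))  # unseen email with many links
print(predict(weights, bias, (1, 1)))  # unseen email with few links
```

Once trained, the `predict` function can be applied to each incoming email without human review, which is the kind of automated decision-making described above.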

Conclusion

This paper has explored what it means to be a data-driven company, highlighting the challenges and tools needed to achieve this transformation. The journey from avoiding data-driven methods to fully embracing them is neither straightforward nor suitable for every organization.

As discussed, cloud storage, big data frameworks, and machine learning models highlight technology’s critical role in enabling accurate and informed decision-making. However, technology alone is not enough. A data-oriented culture and the knowledge to use data effectively are just as essential. This paper also emphasized the importance of understanding business strategies for real-time data processing and integration. Companies that succeed in these areas are better equipped to respond to fast-changing markets and are more likely to grow.

The future of data-driven businesses is full of potential. New advances in automation, machine learning, and business intelligence platforms are opening doors to exciting possibilities. However, important questions remain: Is it realistic or even beneficial for every sector to aim for a fully data-driven approach? And how can companies already overwhelmed with data transform it into actionable insights? It’s important to recognize that being data-driven may not be equally valuable for every organization. Even so, for those who pursue it, the reward is often greater creativity, innovation, and competitiveness in their fields.

List of references

An Exploratory Analysis of the Current Status and Potential of Service-Oriented and Data-Driven Business Models within the Sheet Metal Working Sector: Insights from Interview-Based Research in Small and Medium-Sized Enterprises – ProQuest. (n.d.). Retrieved November 19, 2024, from https://www.proquest.com/docview/3037599055/5469FEB7BE464226PQ/1?accountid=17203&sourcetype=Scholarly%20Journals

Belov, V., Kosenkov, A. N., & Nikulchev, E. (2021). Experimental Characteristics Study of Data Storage Formats for Data Marts Development within Data Lakes. Applied Sciences, 11(18), Article 18. https://doi.org/10.3390/app11188651

Business Intelligence Software—Global | Market Forecast. (2024, September). Statista. https://www.statista.com/outlook/tmo/software/enterprise-software/business-intelligence-software/worldwide

ByteByteGo. (2024, October 15). How Uber Manages Petabytes of Real-Time Data. https://blog.bytebytego.com/p/how-uber-manages-petabytes-of-real

Comparing AWS, Azure, GCP | DigitalOcean. (2023). https://www.digitalocean.com/resources/articles/comparing-aws-azure-gcp

Gogineni, S., Lindow, K., Nickel, J., & Stark, R. (2020). Applying Contextualization for Data-Driven Transformation in Manufacturing. In B. Lalic, U. Marjanovic, D. Romero, V. Majstorovic, & G. VonCieminski (Eds.), Advances in Production Management Systems: Towards Smart and Digital Manufacturing, Pt II (Vol. 592, pp. 154–161). Springer International Publishing AG. https://doi.org/10.1007/978-3-030-57997-5_19

Hanine, M., Lachgar, M., Elmahfoudi, S., & Boutkhoum, O. (2021). MDA Approach for Designing and Developing Data Warehouses: A Systematic Review & Proposal. International Journal of Online and Biomedical Engineering (iJOE), 17(10), Article 10. https://doi.org/10.3991/ijoe.v17i10.24667

Horton, C. (2022, December 7). Lack of Data Maturity Thwarting Organizations’ Success. https://www.channelfutures.com/regulation-compliance/lack-of-data-maturity-thwarting-organizations-success

Lees, H. (2021, July 15). 49 Shocking Business Intelligence Statistics for 2021. TrustRadius for Vendors. https://solutions.trustradius.com/vendor-blog/business-intelligence-statistics-and-trends/

Oseremi Onesi-Ozigagun, Yinka James Ololade, Nsisong Louis Eyo-Udo, & Damilola Oluwaseun Ogundipe. (2024). Data-driven decision making: Shaping the future of business efficiency and customer engagement. International Journal of Multidisciplinary Research Updates, 7(2), 019–029. https://doi.org/10.53430/ijmru.2024.7.2.0031

Prevalova, I. (2024, February 8). Top 17 Data Integration Tools in 2024. Adverity. https://www.adverity.com/blog/the-top-data-integration-tools-in-2023

Rashid, S. M., McCusker, J. P., Pinheiro, P., & Bax, M. P. (2020). The Semantic Data Dictionary – An Approach for Describing and Annotating Data [Journal Article]. Data Intelligence. https://www-proquest-com.zdroje.vse.cz/docview/2890966260/fulltextPDF/F09F4229F2474681PQ/1?accountid=17203&sourcetype=Scholarly%20Journals

Sachdeva, A. (2023, April 18). 5 data-driven decision-making examples. GapScout. https://gapscout.com/blog/5-data-driven-decision-making-examples/

Top 8 Big Data Platforms and Tools in 2024 | Turing. (n.d.). Retrieved November 30, 2024, from https://www.turing.com/resources/best-big-data-platforms

Top Big Data Technologies You Must Know in 2024. (2024, May 29). Simplilearn.Com. https://www.simplilearn.com/big-data-technologies-article

van Es, K. (2023). Netflix & Big Data: The Strategic Ambivalence of an Entertainment Company. Television & New Media, 24(6), 656–672. https://doi.org/10.1177/15274764221125745

What are Machine Learning Models? (2022, January 18). Databricks. https://www.databricks.com/glossary/machine-learning-models

What is Data-Driven vs Data-Informed. (2023, August 17). https://www.devtodev.com/education/articles/en/497/what-is-data-driven-vs-data-informed

What is Machine Learning? Types & Uses. (n.d.). Google Cloud. Retrieved December 2, 2024, from https://cloud.google.com/learn/what-is-machine-learning