Data privacy is a person’s ability to determine who can access their personal data, together with the protection of that data from those who should not have access to it. In general terms, it is the right of a person to decide when, how, and to what extent information about them is shared with others. Such information may include their name, address, email, location, and other details.

Over the years, the importance of data privacy has risen significantly due to the enormous increase in internet usage. Applications, websites, and other online platforms use their users’ personal data to provide personalized services. However, not all personal data is stored properly and safely, which can result in data breaches and subsequent misuse. For this reason, several data protection laws exist to safeguard people’s right to privacy. Organizations must handle personal data safely and demonstrate to their customers and users that they can be trusted. (Cloudflare, n.d.)

Understanding data privacy

Definition of personal data

According to the European Commission’s definition, “personal data is any information that relates to an identified or identifiable living individual.” Personal data that has been de-identified, encrypted, or pseudonymized but can still be used to re-identify a person remains personal data and is covered by the regulations. In contrast, personal data that has been anonymized so that the person can no longer be identified is no longer considered personal data. For data to be truly anonymized, the process must be irreversible, so that the individual can never be re-identified. (European Commission, n.d.)

Key components of data privacy

Key components are the specific building blocks of a strong data privacy strategy. They not only help organizations comply with legal requirements but also demonstrate a commitment to protecting individuals and their personal information. Examples of key components and their meanings are listed below (WebsitePolicies, n.d.):

- Compliance and auditing – regularly updating practices and checking legal compliance

- Data breach response – having a contingency plan for data breaches

- Data collection and consent – securing explicit user consent before collecting data

- Data use and purpose limitation – using data solely for its intended purpose

- Data minimization – collecting only what is essential

- Data transparency – being upfront about data usage through clear policies

- Data transfer safeguards – complying with international data transfer regulations

- Data subject rights – facilitating user rights to access, correct or delete their data

- Data retention and deletion – defining timelines for data storage and ensuring secure deletion

Importance of data privacy

Challenges users face

Internet users face many challenges in protecting their privacy. Some of these challenges are listed below:

- Online tracking – users’ behavior is tracked online on a regular basis. Cookies often track a user’s activities, and while many countries require websites to inform users about cookie use, users may not realize how much of their activity is being recorded.

- Losing control of data – with so many online services, people may not realize how their data is shared with others beyond the websites they use, and they often have no control over what happens to their information.

- Lack of transparency – to use web apps, users usually need to give personal details like their name, email, phone number, or location. However, the privacy policies of these apps can be hard to understand.

- Social media – it’s easier than ever to find someone online through social media, and posts can reveal more personal details than users intend. Overall, social media platforms often collect more data than people realize.

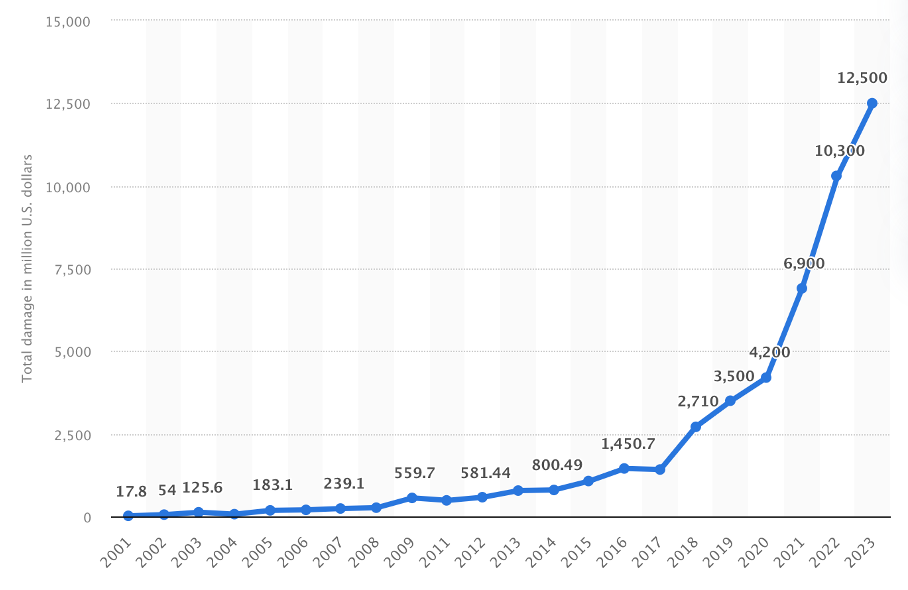

- Cybercrime – many hackers try to steal personal data to commit fraud, break into secure systems, or sell it on the black market. Some attackers use phishing scams to trick people into giving away personal information, while others try to hack into the systems of companies that store personal data (Cloudflare, n.d.). Cybercrime cases alone have risen sharply in recent years and cost people huge amounts of money (Figure 1).

These challenges show how difficult it has become for users to truly protect their data. Many people don’t know enough about how their data is collected or used, and companies make the situation worse by hiding this behind confusing privacy policies. Companies should therefore make their policies easier to read and give people clear choices about their data; for example, users should be able to decide exactly what data to share and with whom. Relatedly, people need to be more careful about what they share online, especially on social media, since many don’t realize that posts, pictures, or even likes can give away personal information.

Challenges businesses face

Users are not the only ones facing data protection challenges; businesses face them too. Only companies that successfully meet these challenges can gain a competitive advantage in the marketplace, while failing to meet them can lead to business failure and an overall negative impact.

The first challenge is communication. Not all organizations clearly tell their users what personal data they store and why. This lack of transparency can lead to mistrust and, potentially, legal consequences. Privacy policies are often written in technical or legal language, which makes them hard for the average user to understand and discourages customers from reading them. In addition, not all companies properly notify users when they introduce or update data collection practices, and users are often unaware of what specific data is shared with third parties such as advertisers or analytics firms. (Cloudflare, n.d.)

Another challenge is cybercrime. Attackers target not only individuals but also the organizations that collect and store data about them. Cybercriminals target companies to exploit personal information for financial gain, identity theft, or other harmful purposes. Cybercrime can cause severe monetary losses: companies may lose funds through fraudulent transactions, and failing to secure customer data can result in fines under data protection laws. According to Cybersecurity Ventures, global cybercrime costs were projected to exceed $8 trillion in 2023. (Cloudflare, n.d.)

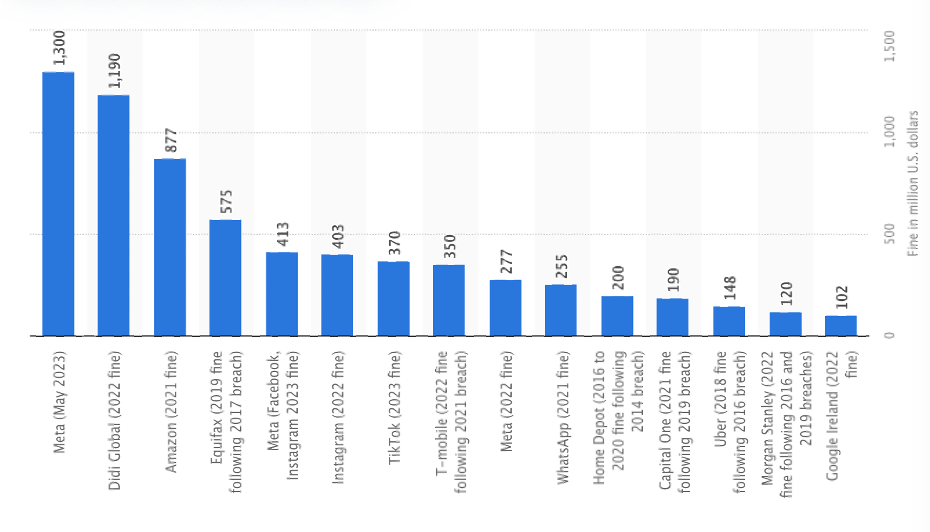

Data breaches, which can cause massive violations of user privacy, are similarly important. They are often confused with cyberattacks in general. However, not every cyberattack is a data breach: a data breach is specifically a security incident in which someone gains unauthorized access to data, which can result in financial, operational, and reputational harm. The number of reported data breaches continues to rise, with millions of records exposed every year. For instance, the 2021 T-Mobile breach exposed the personal data of over 40 million individuals. Commonly targeted data includes customer names, social security numbers, credit card details, health records, and login credentials. (Cloudflare, n.d.)

Last but not least, insider threats arise when employees access or handle data inappropriately because it is insufficiently protected. These threats may be accidental, when employees unintentionally compromise data security by mishandling sensitive information, for example by falling victim to phishing attacks or using weak passwords. They may also be intentional, when employees misuse their access to steal, leak, or manipulate sensitive data for personal or financial gain. (Cloudflare, n.d.)

When it comes to cybersecurity, companies should invest more in protecting data. Given the rising trend in cybercrime, basic security measures are no longer enough. Businesses need advanced security systems and regular audits to stay ahead of attackers, and they need to train their employees better so that they avoid accidental mistakes such as clicking on phishing emails. After all, it is companies’ responsibility to prioritize user trust above everything else: if users feel their data isn’t safe, they’ll stop using those services. Strong data protection practices aren’t just about avoiding fines or legal issues; they’re also about building long-term relationships with customers.

Protection against misuse

Data misuse is the use of information in ways it should not be used. Rules for proper data use are usually found in laws, industry guidelines, company policies, and user agreements. Data misuse is often associated with employee data theft, which happens when employees misuse or mishandle information. Unlike data theft, however, misuse doesn’t always involve sharing information with third parties, although it can sometimes lead to data breaches.

For example, an employee might copy data onto a flash drive for personal use and then lose it, causing a data leak, or send data to a personal laptop that later gets hacked. The three most common types of data misuse are misuse for personal gain, carelessness, and commingling. (Syteca, n.d.)

Data misuse for personal gain happens when someone uses sensitive information for their own benefit, often harming others in the process. For example, an employee might use their company’s trade secrets or customer information to start their own business or sell the data to competitors. This can cause the company to lose money and damage its reputation. (Syteca, n.d.)

Data misuse can also happen due to carelessness, mistakes, or lack of proper training. This might include sharing data with people who shouldn’t have access, accidentally revealing sensitive information, or saving data on unsecured personal devices. Poor data security practices, like not using encryption or improperly setting up cloud storage, can also lead to data breaches. (Syteca, n.d.)

Data commingling happens when a company collects personal data for one purpose and reuses it for another, often without the person’s consent. For example, a company might collect data for academic research but then share it with a partner for marketing. This misuse of personal data can result in fines and lawsuits. Data misuse sometimes goes unnoticed for a long time, but it can still cause serious harm to the company and the people involved. (Syteca, n.d.)

Real-world example of data misuse

In April 2023, the FBI arrested a young member of the U.S. Air National Guard who had leaked highly classified military documents in an online chat group he belonged to. He had been stealing and sharing these sensitive files for over a year. The files included information about the state of the war in Ukraine, Israel’s Mossad intelligence agency, and China’s interests in Nicaragua. This leak is considered one of the most significant recent breaches of US national security (Statista, n.d.). The young man was later sentenced to 15 years in prison for retaining and transmitting classified national defense information. (Syteca, n.d.)

Regulatory frameworks

A regulatory framework is a system of rules and guidelines designed to make sure organizations follow the law and assess how these rules affect people and industries. Regulatory frameworks are important for keeping industries and sectors organized by setting clear standards that businesses must follow. They also protect consumers and the environment by ensuring that products and services meet quality and safety requirements. (DataGuard, n.d.)

General Data Protection Regulation (GDPR)

The GDPR is the European Union’s regulation on information privacy and is often described as the toughest privacy and security law in the world. It was put into effect on May 25, 2018, and imposes heavy fines on organizations that break its privacy and security rules: up to €20 million or 4% of global annual turnover, whichever is higher. The regulation reflects Europe’s strong commitment to data privacy and security at a time when more people use cloud services and data breaches happen regularly. However, the GDPR is broad and not very detailed, which makes compliance challenging for businesses, especially small and medium-sized enterprises (SMEs). (GDPR.eu, n.d.)

Brief history of the GDPR

The right to privacy is part of the 1950 European Convention on Human Rights, which states, “Everyone has the right to respect for his private and family life, his home and his correspondence.” As technology progressed and the internet was invented, the EU saw the need for more modern protections. In 1995, it therefore passed the European Data Protection Directive, which established minimum data privacy and security standards on which each member state based its own implementing law.

In 2011, a Google user sued the company for scanning her emails. Two months later, Europe’s data protection authority declared that the EU needed a comprehensive approach to personal data protection, which marked the beginning of work to update the 1995 directive. The GDPR entered into force in 2016 after passing the European Parliament, and from May 25, 2018, all organizations were required to comply. (GDPR.eu, n.d.)

Data protection principles

The GDPR sets out seven protection and accountability principles that apply whenever personal data is processed (GDPR.eu, n.d.):

- Lawfulness, fairness, and transparency – personal data shall be processed lawfully, fairly, and in a transparent way

- Purpose limitation – data should be collected for clear, specific, and lawful purposes and should not be used in ways that go against those purposes

- Data minimization – collecting only the data that is necessary, relevant, and adequate for the specific purpose it is being used for

- Accuracy – personal data must be accurate and kept up to date, and every reasonable step must be taken to ensure that inaccurate personal data are erased or rectified without delay

- Storage limitation – personal data should be kept in a form that allows identification of individuals only for as long as necessary for the purposes for which it was collected

- Integrity and confidentiality – processing personal data in a way that ensures it is securely protected, including against unauthorized access, unlawful use, and accidental loss, destruction, or damage

- Accountability – the controller is responsible for being able to demonstrate GDPR compliance with all these principles
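To make purpose limitation more concrete, the Python sketch below assumes that each record is tagged at collection time with the purposes it may be used for, and that every access has to name a purpose. The `PersonalRecord` class, the `use_record` function, and the purpose labels are hypothetical illustrations, not part of the GDPR or of any library.

```python
from dataclasses import dataclass, field


@dataclass
class PersonalRecord:
    """A personal-data record tagged with the purposes it was collected for (hypothetical model)."""
    subject_id: str
    data: dict
    allowed_purposes: frozenset = field(default_factory=frozenset)


class PurposeViolation(Exception):
    """Raised when data is requested for a purpose it was not collected for."""


def use_record(record: PersonalRecord, purpose: str) -> dict:
    # Purpose limitation: refuse any processing outside the declared purposes.
    if purpose not in record.allowed_purposes:
        raise PurposeViolation(
            f"{record.subject_id}: '{purpose}' is not among {sorted(record.allowed_purposes)}"
        )
    return record.data


record = PersonalRecord(
    subject_id="u-001",
    data={"email": "ana@example.com"},
    allowed_purposes=frozenset({"order_confirmation"}),
)

print(use_record(record, "order_confirmation"))  # collected for this purpose: allowed
try:
    use_record(record, "marketing")  # never declared: refused
except PurposeViolation as err:
    print("blocked:", err)
```

Raising an error at the single access point is also a natural place to log refused requests, which supports the accountability principle of being able to demonstrate compliance.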

California Consumer Privacy Act (CCPA)

In North America, a well-known example is the California Consumer Privacy Act of 2018 (CCPA), which gives people more control over the personal information that businesses collect about them. The CCPA regulations explain how to comply with the law. The act gives California residents new privacy rights, including the right to know what personal information a business collects about them and how it is used and shared, the right to delete their personal information, the right to opt out of the sale or sharing of their personal information, and the right to non-discrimination for exercising their CCPA rights. (Office of the Attorney General, n.d.)

Indonesia’s Personal Data Protection Act (PDPA)

Data protection laws in Asia are changing quickly. Countries like China, Thailand, Indonesia, and Sri Lanka have recently introduced detailed privacy laws. For example, Indonesia’s Personal Data Protection Act includes rules about how data is processed, reporting data breaches, and appointing data protection officers. Sri Lanka’s Personal Data Protection Act applies both within the country and internationally, showing a trend in Asia toward broader privacy laws. However, these laws differ a lot in their details and how they are enforced, highlighting the variety of approaches to data privacy in the region. (GDPR Local, n.d.)

Challenges in global regulations

In today’s connected world, data privacy rules are becoming more complicated. As more countries create or update their privacy laws, global businesses face new challenges to follow them. In recent years, the approach to data privacy has changed a lot. The European Union’s General Data Protection Regulation (GDPR) set a high standard, encouraging many countries to create new laws or improve their old ones to match this level of protection. These changes show a global shift toward making consumer privacy and data security a priority. (360 Business Law, n.d.)

Around the world, 120 countries have passed laws to protect personal data and privacy. These laws are designed to keep individuals’ information safe. They usually include rights for people to control their data and rules for organizations to handle it securely and responsibly. Many of these laws are based on principles from major regulations like the EU’s General Data Protection Regulation (GDPR) and the United States’ data protection laws. Key ideas include getting consent, using security measures, and ensuring accountability. (GDPR Local, n.d.)

Differences among the regions

Data protection laws around the world share some similarities, but they also differ considerably, especially in how they are enforced, where they apply, and the rights they give to individuals. For example, the GDPR applies to any business that handles the data of people living in the EU, no matter where the business is located. In contrast, U.S. state laws such as the California Consumer Privacy Act (CCPA) focus mainly on protecting the data of California residents.

In Asia, countries like China and Indonesia have created strict data protection laws that include special rules, such as requiring companies to report data breaches and appoint data protection officers. India’s Data Protection Law is very focused on consent, with strict rules about how consent must be given and used, making it different from both the GDPR and U.S. standards. (GDPR Local, n.d.)

Strategies for protecting sensitive information

Anonymization and pseudonymization

The GDPR encourages pseudonymization and anonymization as data masking techniques. Such techniques reduce risk and help data controllers and processors meet their compliance obligations. The two techniques differ, and which one to use depends on the degree of risk and on how the data will be processed.

Data anonymization

Data anonymization is the process of removing or altering personal identifiers in data to protect an individual’s privacy. Details such as names, social security numbers, and addresses are removed or altered so that the data can still be used but is no longer linked to a specific person. Even after these identifiers are removed, however, attackers may try to “de-anonymize” the data by comparing it with other public sources of information to infer the original identities. Common anonymization techniques include data masking and data swapping; pseudonymization is also often listed, although under the GDPR pseudonymized data is still personal data.
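One common way to make such linkage harder is generalization combined with a k-anonymity check; this is a specific technique named here for illustration, not the only approach the sources describe. The Python sketch below drops the direct identifier (name), coarsens the quasi-identifiers (age and ZIP code), and reports k, the size of the smallest group of records sharing the same quasi-identifier values. The sample records and field names are hypothetical.

```python
from collections import Counter

# Hypothetical sample data: "name" is a direct identifier; "age" and "zip"
# are quasi-identifiers that could be linked with public data sources.
records = [
    {"name": "Alice", "age": 34, "zip": "10115", "diagnosis": "flu"},
    {"name": "Bob", "age": 37, "zip": "10117", "diagnosis": "asthma"},
    {"name": "Carol", "age": 52, "zip": "20095", "diagnosis": "flu"},
    {"name": "Dave", "age": 58, "zip": "20097", "diagnosis": "diabetes"},
]


def anonymize(rec: dict) -> dict:
    decade = rec["age"] // 10 * 10
    return {
        "age_range": f"{decade}-{decade + 9}",  # generalize exact age to a decade
        "zip_prefix": rec["zip"][:3] + "**",    # truncate the ZIP code
        "diagnosis": rec["diagnosis"],          # attribute kept for analysis
    }  # "name" is dropped entirely


def k_anonymity(rows, quasi_identifiers) -> int:
    """Size of the smallest group of rows sharing the same quasi-identifier values."""
    groups = Counter(tuple(row[q] for q in quasi_identifiers) for row in rows)
    return min(groups.values())


anonymized = [anonymize(r) for r in records]
print(anonymized[0])
print("k =", k_anonymity(anonymized, ["age_range", "zip_prefix"]))
```

A higher k means each person hides in a larger crowd, but k-anonymity alone is not a guarantee: if everyone in a group shared the same diagnosis, that diagnosis would still be revealed.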

However, data anonymization also has disadvantages. Under the GDPR, websites must obtain user consent before collecting personal information such as IP addresses, device IDs, and cookies. Collecting only anonymous data and removing identifiers from databases helps protect privacy, but it limits how useful the data is for businesses. Anonymized data cannot, for example, be used for marketing or to tailor the user experience, because without identifiable information businesses cannot track user behavior or personalize content. Organizations must therefore balance privacy concerns against the need for actionable insights from their data. (Imperva, n.d.)

Data pseudonymization

Pseudonymization is a privacy-enhancing technique that replaces identifying fields in a data record with artificial identifiers, or pseudonyms. This can involve using a single pseudonym for a group of replaced fields or assigning a unique pseudonym to each field individually. The General Data Protection Regulation (GDPR) defines pseudonymization in Article 4(5) as “the processing of personal data in such a way that the data can no longer be attributed to a specific data subject without the use of additional information.”

To implement pseudonymization effectively, the additional information that links pseudonyms to the original data must be stored separately and safeguarded with technical and organizational measures. This ensures that the data cannot be linked back to an identifiable individual without access to the supplementary information. The approach reduces the risk of exposing sensitive information while preserving the data’s usefulness for analysis, making it an essential tool for organizations striving to comply with privacy regulations and protect personal data. (GRC World Forums, 2021)
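A minimal Python sketch of this idea, assuming keyed hashing (HMAC-SHA256) as the pseudonymization method, follows. The secret key plays the role of the “additional information”: in this sketch it is generated in memory, whereas in practice it would be stored separately from the dataset, for example in a key management system. The `pseudonymize` function and the sample orders are hypothetical.

```python
import hashlib
import hmac
import secrets

# The key is the "additional information": it must be kept apart from the
# pseudonymized dataset (generated in memory here only for the demo).
PSEUDONYM_KEY = secrets.token_bytes(32)


def pseudonymize(identifier: str, key: bytes = PSEUDONYM_KEY) -> str:
    """Replace an identifier with a keyed, deterministic pseudonym (HMAC-SHA256)."""
    digest = hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()
    return "p_" + digest[:16]  # shortened for readability


orders = [
    {"email": "ana@example.com", "amount": 40},
    {"email": "ben@example.com", "amount": 15},
    {"email": "ana@example.com", "amount": 25},
]
pseudonymized = [{"customer": pseudonymize(o["email"]), "amount": o["amount"]} for o in orders]

# The same person always gets the same pseudonym, so per-customer analysis still works.
totals = {}
for row in pseudonymized:
    totals[row["customer"]] = totals.get(row["customer"], 0) + row["amount"]
print(totals)
```

Because anyone holding the key can recompute the pseudonym for a known email address and link records back to that person, the output is still personal data under the GDPR. A plain, unkeyed hash would be weaker still, since email addresses can simply be guessed and hashed by an attacker.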

The critical difference between anonymized and pseudonymized data is whether the data can still be considered personal data. Pseudonymized data can still potentially be linked back to a person, either directly or indirectly, whereas anonymized data cannot be traced back to any individual once it has been processed. Pseudonymization and anonymization work in different ways. Anonymization completely removes any information that could identify someone, making it impossible to re-identify the data later. Pseudonymization, on the other hand, doesn’t completely erase identifiers. It simply makes it harder to connect the data to a specific person, often through methods like encryption. (Imperva, n.d.)

Data masking

Data masking protects sensitive information by replacing it with altered values. This can involve creating a copy of a database and applying changes such as shuffling characters, substituting words or characters, or encrypting values. For example, instead of showing an actual value, you might replace it with symbols such as “*” or “x.” Data masking makes it nearly impossible for someone to reverse-engineer the original data, so the data remains protected even if it is exposed to unauthorized users. The advantage of masking is that the data stays recognizable and usable without revealing the actual identities behind it. (Imperva, n.d.)
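The substitution variant of masking described above can be sketched in a few lines of Python. The sketch assumes two hypothetical field formats, a card number shown with only its last four digits and an email address shown with only its first character and domain; the function names are illustrative.

```python
def mask_value(value: str, visible: int = 4, mask_char: str = "*") -> str:
    """Mask all but the last `visible` letters/digits, keeping separators in place."""
    total = sum(ch.isalnum() for ch in value)
    keep_from = total - visible  # index of the first alphanumeric character left visible
    out, seen = [], 0
    for ch in value:
        if ch.isalnum():
            out.append(ch if seen >= keep_from else mask_char)
            seen += 1
        else:
            out.append(ch)  # keep spaces and dashes so the format stays recognizable
    return "".join(out)


def mask_email(email: str) -> str:
    """Keep the first character of the local part and the domain."""
    local, sep, domain = email.partition("@")
    if not sep:
        return mask_value(email, visible=0)  # not an email address: mask everything
    return local[:1] + "*" * (len(local) - 1) + "@" + domain


print(mask_value("4111 1111 1111 1234"))   # **** **** **** 1234
print(mask_email("jane.doe@example.com"))  # j*******@example.com
```

Unlike encryption, nothing here can be reversed: the masked output no longer contains the hidden characters, which is what makes it suitable for support screens or test data.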

Compared with masking, data encryption translates data into another form or code so that only people with access to a secret key or password can read it. Data masking, by contrast, is more widely applicable because it lets organizations keep their customer data usable. With masking, personal information is anonymized, which makes the data safe to use in areas such as support, analytics, testing, or outsourcing, where sensitive details should not be exposed. The data can still serve various purposes, while individuals’ privacy is maintained by hiding their identifiable information. (GRC World Forums, 2021)

Data swapping

Data swapping, also known as shuffling or permutation, rearranges values in a dataset so that they no longer match the original records. Values in columns containing identifying information, such as dates of birth, are swapped between records, making it harder to link the data to specific individuals. The choice of column matters: swapping membership type values, for example, may do less for anonymization than swapping a more sensitive attribute like date of birth, a quasi-identifier that can help re-identify a person when combined with other data. This technique protects privacy by disrupting the association between data attributes and the original individuals. (Imperva, n.d.)
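Data swapping on a single column can be sketched as a random permutation of that column’s values. The Python example below uses hypothetical membership records and a fixed seed only so the demo is reproducible; a real deployment would need an unpredictable source of randomness, such as `random.SystemRandom`.

```python
import random


def swap_column(rows, column, seed=None):
    """Return copies of `rows` with the values of one column randomly permuted."""
    rng = random.Random(seed)  # seeded only to make the demo reproducible
    values = [row[column] for row in rows]
    rng.shuffle(values)
    return [{**row, column: value} for row, value in zip(rows, values)]


members = [
    {"member_id": 1, "birth_date": "1985-03-14", "membership": "gold"},
    {"member_id": 2, "birth_date": "1992-07-02", "membership": "silver"},
    {"member_id": 3, "birth_date": "1978-11-23", "membership": "basic"},
    {"member_id": 4, "birth_date": "2001-01-30", "membership": "gold"},
]

swapped = swap_column(members, "birth_date", seed=42)
for row in swapped:
    print(row)
```

The set of birth dates, and therefore statistics on that column alone, stays the same, while its link to the other columns is broken. Note that a random shuffle can leave some values in their original rows, so swapping is usually combined with other techniques.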

Data encryption

Data encryption is a security method that translates data into a code, or ciphertext, that can be read only by people with access to a secret key or password. It protects data against theft, alteration, and compromise. For encryption to protect data, the decryption key must be kept secret and protected against unauthorized access. Data can be encrypted both at rest and in transit. Two main types of encryption are used today: symmetric and asymmetric. (Cloudian, n.d.)

Symmetric encryption

Symmetric encryption, the first type, uses the same key for both encryption and decryption. It is faster than asymmetric encryption and is best used by individuals or within closed systems. Using symmetric encryption in open systems, such as over a network with multiple users, requires transmitting the encryption key, which increases the risk of the key being intercepted and stolen.

The most commonly used symmetric algorithm is AES, the Advanced Encryption Standard, a specification for the encryption of electronic data established by the U.S. National Institute of Standards and Technology (NIST) in 2001. It was developed by two cryptographers, Joan Daemen and Vincent Rijmen. AES is available in many encryption packages and is the first publicly accessible cipher approved by the U.S. National Security Agency (NSA) for top secret information. (Cloudian, n.d.)

AES is based on a design principle known as a substitution-permutation network and is efficient in both software and hardware. It is a variant of Rijndael with a fixed block size of 128 bits and a key size of 128, 192, or 256 bits. Block size is the length of the fixed-length string of bits the cipher processes at a time, and key size is the number of bits in the key used by the algorithm. (Cloudian, n.d.)

Another type of symmetric encryption is format-preserving encryption (FPE), which secures data while keeping its original format, making it especially useful for masking sensitive content. For instance, if a customer ID consists of two letters followed by ten digits, the encrypted output keeps the same structure, replacing the original characters with other valid ones. As a result, the encrypted data fits into existing systems without requiring additional modifications. (Cloudian, n.d.)
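To show how the customer ID example can keep its format, here is a toy Python sketch built on a balanced Feistel network with HMAC-SHA256 as the round function. It is an illustration of the format-preserving idea only: it is not the NIST-standardized FF1 or FF3-1 mode, it encrypts the letters and the digits independently, and its tiny letter domain (26 × 26 values) would be weak in practice. The function names and key are hypothetical.

```python
import hashlib
import hmac
import re

ROUNDS = 10
LETTERS = "ABCDEFGHIJKLMNOPQRSTUVWXYZ"
ID_PATTERN = re.compile(r"[A-Z]{2}[0-9]{10}")  # assumed format: 2 letters + 10 digits


def _round_function(key: bytes, domain: str, rnd: int, value: int, modulus: int) -> int:
    # Keyed pseudorandom function (HMAC-SHA256), reduced into the range of one half.
    msg = f"{domain}|{rnd}|{value}".encode()
    return int.from_bytes(hmac.new(key, msg, hashlib.sha256).digest(), "big") % modulus


def _feistel(left: int, right: int, modulus: int, key: bytes, domain: str, decrypt: bool):
    """Balanced Feistel network on two halves modulo `modulus`; each round is invertible."""
    if not decrypt:
        for rnd in range(ROUNDS):
            left, right = right, (left + _round_function(key, domain, rnd, right, modulus)) % modulus
    else:
        for rnd in reversed(range(ROUNDS)):
            left, right = (right - _round_function(key, domain, rnd, left, modulus)) % modulus, left
    return left, right


def _transform(customer_id: str, key: bytes, decrypt: bool) -> str:
    if not ID_PATTERN.fullmatch(customer_id):
        raise ValueError("expected two uppercase letters followed by ten digits")
    # Letters: each letter is a value in 0..25; the two letters are the two halves.
    a, b = LETTERS.index(customer_id[0]), LETTERS.index(customer_id[1])
    a, b = _feistel(a, b, 26, key, "letters", decrypt)
    # Digits: two five-digit halves, each in 0..99999.
    d1, d2 = int(customer_id[2:7]), int(customer_id[7:])
    d1, d2 = _feistel(d1, d2, 10**5, key, "digits", decrypt)
    return f"{LETTERS[a]}{LETTERS[b]}{d1:05d}{d2:05d}"


def fpe_encrypt(customer_id: str, key: bytes) -> str:
    return _transform(customer_id, key, decrypt=False)


def fpe_decrypt(token: str, key: bytes) -> str:
    return _transform(token, key, decrypt=True)


key = b"demo-key-do-not-use-in-production"
token = fpe_encrypt("AB0123456789", key)
print(token)                    # still two letters followed by ten digits
print(fpe_decrypt(token, key))  # AB0123456789
```

Because every output value stays inside the same alphabet and length, the token can be stored in the original database column; unlike masking, anyone holding the key can recover the original ID.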

Asymmetric encryption

The second type of data encryption is asymmetric encryption, which uses two different keys: a public key and a private key that are mathematically linked and work only as a pair. Either key can encrypt data, but only the corresponding paired key can decrypt it. This method is ideal for open networks, such as the internet, and for scenarios involving multiple users, because the public key can be shared openly without compromising security. Commonly used asymmetric algorithms include RSA, DSA, and ECC, each offering different strengths for secure communication and data protection.

DSA, or Digital Signature Algorithm, is a method primarily designed for verifying digital signatures rather than encrypting data. With DSA, the private key owner generates a signature of a given message. This signature can be validated by anyone with access to the corresponding public key, confirming the message’s authenticity and integrity while ensuring it has not been altered during transmission.

RSA is one of the first public-key cryptosystems. Known for its long key lengths and robust security, RSA is used extensively across the internet: it underpins various security protocols and enables web browsers to establish secure connections over otherwise insecure networks. ECC, or Elliptic Curve Cryptography, is often presented as an improvement on RSA because it provides comparable security with significantly shorter keys. (Cloudian, n.d.)
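The key-pair idea can be illustrated with “textbook” RSA using deliberately tiny primes, as in many classroom examples. This Python sketch is insecure by design: real RSA uses keys of 2048 bits or more and padding schemes such as OAEP (for encryption) and PSS (for signatures). The signing half shows the RSA-style signature principle only; DSA itself works differently.

```python
# Textbook RSA with tiny primes: for illustration only (no padding, insecure key size).
p, q = 61, 53
n = p * q                 # public modulus: 3233
phi = (p - 1) * (q - 1)   # 3120
e = 17                    # public exponent, coprime with phi
d = pow(e, -1, phi)       # private exponent via modular inverse (Python 3.8+): 2753

public_key, private_key = (e, n), (d, n)


def apply_key(m: int, key: tuple) -> int:
    """Raise m to the key's exponent modulo n (used for both encryption and signing)."""
    exponent, modulus = key
    return pow(m, exponent, modulus)


message = 65  # messages must be integers smaller than n

# Encryption: anyone can encrypt with the public key; only the private key decrypts.
ciphertext = apply_key(message, public_key)   # 2790
assert apply_key(ciphertext, private_key) == message

# Signing: the private key produces a signature that anyone can verify with the public key.
signature = apply_key(message, private_key)
assert apply_key(signature, public_key) == message

print("ciphertext:", ciphertext, "signature:", signature)
```

The security of real RSA rests on the difficulty of factoring n back into p and q; with primes this small, anyone can do it instantly.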

Weaknesses of encrypted data

However, even encrypted data can be hacked. There are multiple ways attackers can compromise data-encrypted systems:

Accidental exposure occurs when users inadvertently reveal the key or fail to implement proper security measures. Attackers can then use the exposed key to decrypt sensitive data, which is why the decryption key must be kept confidential and safeguarded against unauthorized access.

Another way is through malware in endpoint devices. Malware targeting endpoint devices poses a significant threat to encryption mechanisms, such as full disk encryption. Attackers can infiltrate a device using malicious software, gaining access to encryption keys stored on the device. Once compromised, these keys can be exploited to decrypt sensitive data, bypassing the intended security measures and exposing the system to further attacks.

Another threat is a brute-force attack, in which attackers try to break encryption by guessing different keys. The effectiveness of encryption is closely tied to key size, with longer keys providing greater resistance to such attacks, which is why 256-bit AES keys are often recommended for strong security. However, some systems still use weak ciphers or short keys, leaving them open to brute-force attacks in which attackers systematically try every possible key until they find the correct one.
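The effect of key size can be made concrete with simple arithmetic: a key of b bits has 2^b possible values, and on average an attacker must try half of them. The Python sketch below assumes a hypothetical attacker testing one trillion (10^12) keys per second; 56 bits is included because that is the key size of the older DES cipher.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600
GUESSES_PER_SECOND = 10**12  # assumed attacker speed: one trillion keys per second


def average_years_to_brute_force(key_bits: int, rate: float = GUESSES_PER_SECOND) -> float:
    """Expected time to find a key by exhaustive search (half the keyspace on average)."""
    keyspace = 2 ** key_bits
    return (keyspace / 2) / rate / SECONDS_PER_YEAR


for bits in (56, 128, 256):
    print(f"{bits}-bit key: about {average_years_to_brute_force(bits):.3g} years")
```

Every additional bit doubles the work, so under this assumption a 56-bit key falls in hours, while 128-bit and 256-bit keys are far beyond reach of exhaustive search.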

Despite all these risks, encryption is a strong and effective security measure. It provides a robust line of defense against unauthorized access, ensuring data confidentiality and integrity. However, encryption alone cannot guarantee absolute security, because weak key management, software flaws, or user errors can compromise it. Encryption should therefore be viewed as a critical layer within a comprehensive security strategy rather than the sole protective measure, and organizations must complement it with additional safeguards, such as careful key management and access controls. This layered approach minimizes the impact of potential breaches and helps keep sensitive information protected even if one defense mechanism fails.

Organizations that neglect to put data minimization strategies into practice expose themselves to several risks. Generally speaking, the more data a company holds, the more attractive a target it becomes for hackers and cybercriminals.

Data minimization

Data minimization refers to collecting and storing only the personal information that is absolutely necessary for a specific purpose. Instead of gathering large amounts of data just in case it might be useful later, this approach encourages organizations to be careful and deliberate about the data they handle. Once the data has served its purpose, it should be securely deleted. The goal of data minimization is to reduce risks tied to storing and managing personal information. By keeping only the essential data, organizations can protect both themselves and the people whose information they handle.

Data minimization helps protect people’s personal and health information from being used in ways they didn’t agree to, like targeted ads or sales profiling. These practices can feel invasive, making customers uncomfortable. When companies avoid using data without permission, it shows they act responsibly, which can build trust. This trust often leads to happier customers who are more likely to stay loyal to the brand. (Kiteworks, n.d.)

Key features of data minimization

As mentioned above, a key part of data minimization is collecting only the data that is necessary, meaning just the information needed for a specific purpose and nothing extra. For example, if a company is doing market research, it should only collect data related to that research, not unrelated details. Alongside limited data collection comes data retention: keeping data only for as long as it’s needed. For example, a company might store information about its employees only while they work there; once an employee leaves, data that is no longer needed, beyond any legally required retention periods, should be deleted. Deleting unnecessary data reduces storage risks and the impact of potential data breaches.
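Both ideas in the paragraph above, collecting only what a purpose needs and deleting data once its retention period ends, can be sketched in Python. The purpose names, field allowlists, and the three-year retention period are hypothetical assumptions; real retention periods depend on the applicable law.

```python
from datetime import date, timedelta

# Hypothetical allowlists: the only fields needed for each declared purpose.
REQUIRED_FIELDS = {
    "market_research": {"age_range", "country", "survey_answers"},
    "payroll": {"employee_id", "name", "bank_account", "salary"},
}


def minimize(submitted: dict, purpose: str) -> dict:
    """Keep only the fields required for the purpose; everything else is never stored."""
    allowed = REQUIRED_FIELDS[purpose]
    return {k: v for k, v in submitted.items() if k in allowed}


def is_expired(end_of_relationship: date, retention: timedelta, today: date) -> bool:
    """True if the record has outlived its (assumed) retention period and should be deleted."""
    return today > end_of_relationship + retention


form = {
    "age_range": "30-39", "country": "DE", "survey_answers": [4, 5, 3],
    "email": "ana@example.com", "phone": "+49 30 123456",
}
print(minimize(form, "market_research"))  # email and phone are dropped

left_company = date(2020, 6, 30)
print(is_expired(left_company, timedelta(days=3 * 365), today=date(2024, 1, 1)))  # True
```

Filtering at the point of collection means unneeded fields never reach storage, and a periodic job applying `is_expired` could flag records for secure deletion.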

Data minimization also favors anonymization or pseudonymization where possible. Pseudonymization changes personal data so that it cannot be linked to a specific person without additional information kept separately, while anonymization removes that link permanently. For example, a healthcare provider might anonymize patient data for research so that it cannot be traced back to any individual, which helps protect patients’ privacy.

Lastly, transparency is an important part of data minimization. It means organizations should be clear and honest about how they collect, use, and handle personal data. For example, they can provide simple, easy-to-read privacy notices or explain people’s rights regarding their data. This helps individuals understand and make informed choices about how their data is used, giving them more control. Being transparent builds trust between organizations and people and ensures companies are responsible for their data practices. (Kiteworks, n.d.)

Ethical considerations

Balancing privacy and innovation

New technologies bring great opportunities but also create concerns about data protection and privacy. To balance innovation and privacy, strong data protection, clear data handling rules, and following regulations are essential. Companies should focus on privacy from the start, use tools like encryption and anonymization, control who can access data, and promote privacy awareness. This helps ensure that innovation respects people’s rights and builds trust in digital systems.

In today’s digital world, there is a constant tension between innovation and privacy. Innovation drives progress and makes our lives easier, bringing great advancements in technology, healthcare, and more. On the other hand, it often comes at the cost of some personal privacy. As businesses and governments collect large amounts of data, people worry about data breaches, surveillance, and the misuse of their personal information. Therefore, it is crucial to find a balance between encouraging new ideas and keeping our privacy intact. That means putting data protection measures in place, being open about how data is used, and reassuring people that their data is safe. (TrustCloud, n.d.)

Finding the balance between innovation and privacy is one of the biggest ethical challenges of our time. On one hand, innovation is crucial for progress, better healthcare, smarter technology, and solutions to global problems. On the other hand, losing control of personal data can feel like losing a part of our freedom. Therefore, it is crucial for companies and governments to put as much emphasis on privacy as they do on growth. Done well, privacy and innovation can go hand in hand.

For example, technologies like artificial intelligence or blockchain can improve systems while still protecting personal information. If companies and governments focus on creating ethical frameworks, we can have progress that respects people’s rights. Balancing privacy and innovation is challenging, but it is necessary to create a fair and trustworthy digital future.

Strategies for balancing innovation and privacy

To balance innovation and privacy, organizations can use strategies like building systems with privacy in mind, conducting detailed privacy impact assessments, being clear about how they handle data, collecting only the necessary information, anonymizing data, raising privacy awareness, and following privacy laws. Together, these measures help build trust and encourage responsible innovation. Five strategies for achieving a balance between innovation and privacy are listed below:

- Privacy by design – this approach makes data protection an integral part of the development process from the start, which promotes ethical and responsible technology.

- Transparency and consent – it is very important to be clear about how data is gathered and to keep users informed. If organizations communicate clearly, people can make smart choices about how their personal information is used, which helps build trust in new technologies.

- Ethical AI practices – Artificial intelligence (AI) systems should follow ethical rules and avoid biased algorithms or unfair practices. Keeping AI fair and accountable makes sure that new technologies meet those ethical standards.

- Data minimization – the principle of collecting only the necessary data for a specific purpose, which might reduce the risk of privacy breaches.

- Robust cybersecurity measures – investing in measures like encryption and regular security audits is crucial for protecting against unauthorized access and data breaches. (TrustCloud, n.d.)

Challenges and future of data privacy

Every day, businesses face more advanced cyber threats as technologies improve. Tools like artificial intelligence are becoming more common, but they also bring new risks. Because of this, companies are focusing on security methods that can adapt to new threats and keep their data safe in the future. The future of data security will likely involve new technologies, updated regulations, and changes in how people use and protect their data. The list below shows some developments that can be expected in the coming years:

- Increased use of AI and Machine Learning – these technologies will play a bigger role in improving data security. They help spot patterns, unusual activity, and possible threats in real-time, allowing faster action against security breaches.

- Blockchain technology – best known for cryptocurrencies, blockchain also has great potential for data security. Because of its decentralized and tamper-resistant design, it can offer stronger protection against data manipulation and unauthorized access.

- Biometric authentication – biometric methods like fingerprint scanning, facial recognition, and iris scanning are expected to become more common because they are generally considered more secure than traditional password systems.

- Supply chain security – keeping supply chains secure will be important to stop attacks through third-party vendors and partners. This involves thorough security assessments, vendor risk management programs, and clear security requirements in contracts.

- Continuous monitoring and incident response – constantly monitoring networks and systems for suspicious activities will remain important for spotting and addressing security threats early. In addition, automated response systems are expected to become more advanced, reducing the impact of breaches. (Alumio, n.d.)
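The tamper resistance attributed to blockchain in the list above rests largely on hash chaining: each record stores the hash of the previous one, so changing any earlier record breaks the link that follows it. The Python sketch below shows only that mechanism, using the standard `hashlib` and `json` modules. It leaves out decentralization and consensus entirely, and the record contents are invented for illustration.

```python
import hashlib
import json

GENESIS_PREV = "0" * 64  # placeholder "previous hash" for the first block


def block_hash(index: int, data: str, prev_hash: str) -> str:
    """SHA-256 over a canonical JSON encoding of the block's contents."""
    payload = json.dumps({"index": index, "data": data, "prev": prev_hash},
                         sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def build_chain(entries):
    """Link each entry to the hash of the entry before it."""
    chain, prev = [], GENESIS_PREV
    for i, data in enumerate(entries):
        h = block_hash(i, data, prev)
        chain.append({"index": i, "data": data, "prev": prev, "hash": h})
        prev = h
    return chain


def verify_chain(chain) -> bool:
    """Recompute every hash and check every link; any edit makes this fail."""
    prev = GENESIS_PREV
    for block in chain:
        if block["prev"] != prev:
            return False
        if block["hash"] != block_hash(block["index"], block["data"], block["prev"]):
            return False
        prev = block["hash"]
    return True


audit_log = build_chain(["consent granted", "data exported", "consent withdrawn"])
print(verify_chain(audit_log))  # True
audit_log[1]["data"] = "nothing exported"  # simulate tampering with one entry
print(verify_chain(audit_log))  # False
```

On a single machine, someone able to rewrite every entry could simply recompute all the hashes; blockchains counter this by keeping many independent copies of the chain, which this sketch does not model.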

Expected trends for future regulations

In the future, data protection rules are likely to become even stricter. Privacy laws will focus more on sensitive data, such as information about children and health. In the United States, changes to the Children’s Online Privacy Protection Act are being discussed, aiming to increase penalties and responsibilities for mishandling children’s data. New technologies bring both challenges and opportunities for data protection. Because they make it harder to track how consumer data is used, strong rules and oversight systems will be needed to ensure that data is handled lawfully. The growing use of artificial intelligence will also lead to more AI-related laws around the world as governments try to protect consumer rights while still supporting technological progress. (GDPR Local, n.d.)

The role of AI

As technology evolves quickly, AI tools are expected to become a key part of improving data security in the future. Some examples of AI tools:

- Threat detection and prevention – AI-powered threat detection systems can process large amounts of data in real-time to spot patterns and unusual activities that may signal security threats. These tools can recognize known threats using set rules and also detect new or unknown attacks by analyzing behavior, helping organizations stop security breaches before they happen.

- Automated incident response – AI tools can automate how incidents are handled by sending real-time alerts, prioritizing security issues based on their seriousness, and coordinating response actions. These automated systems help organizations respond to security breaches faster, reducing downtime and minimizing the impact.

- User authentication and fraud detection – AI-powered authentication systems improve the process of verifying user identities by analyzing factors like biometric data, behavior patterns, device information, and context. These tools can accurately confirm who the user is and also detect and prevent fraud, such as account takeovers and identity theft. (Alumio, n.d.)
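AI-based threat detection systems typically learn what normal behavior looks like and flag deviations from it. As a much simpler stand-in for that idea (a statistical baseline, not machine learning), the Python sketch below flags hourly counts of failed logins that lie far above the recent average. The window size, the threshold of three standard deviations, and the sample counts are illustrative assumptions.

```python
from statistics import mean, stdev
from typing import List, Tuple


def is_anomalous(history: List[float], value: float, threshold: float = 3.0) -> bool:
    """Flag `value` if it lies more than `threshold` standard deviations above
    the mean of `history` (a one-sided z-score test)."""
    mu = mean(history)
    sigma = stdev(history)  # sample standard deviation; needs >= 2 data points
    if sigma == 0:
        return value > mu  # flat history: any increase counts as unusual
    return (value - mu) / sigma > threshold


def scan(counts: List[float], window: int = 8,
         threshold: float = 3.0) -> List[Tuple[int, float]]:
    """Compare each count with the `window` counts before it and return
    (position, count) pairs that look anomalous."""
    alerts = []
    for i in range(window, len(counts)):
        if is_anomalous(counts[i - window:i], counts[i], threshold):
            alerts.append((i, counts[i]))
    return alerts


failed_logins_per_hour = [3, 5, 4, 6, 5, 4, 3, 5, 4, 42, 5]
print(scan(failed_logins_per_hour))  # [(9, 42)]
```

Real systems combine many such signals and adapt their baselines over time; a fixed rule like this one would, for example, raise false alarms during legitimate traffic peaks.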

AI has enormous potential to improve data security, but it also brings new responsibilities. On the positive side, AI can handle tasks that are impossible for humans, like quickly analyzing huge amounts of data or detecting subtle threats that traditional systems might miss. However, AI is not perfect: it can make errors or simply overlook some threats. Companies using AI for security need to regularly check these systems for errors or biases. At the same time, it is important for people to stay cautious. AI can make security better, but it is not a replacement for good practices like using strong passwords or being aware of online risks.

Conclusion

Data privacy is now an important part of human life in the digital age, as more personal information is stored and shared than ever before. Technology has advanced rapidly, creating both opportunities and threats in the management and protection of personal data. Laws such as the General Data Protection Regulation (GDPR) in Europe and the California Consumer Privacy Act (CCPA) in the U.S. are therefore important for setting rules that protect people’s data and require organizations to use it honestly and judiciously.

Protecting personal data is also necessary for preventing misuse and building trust between users and businesses. When organizations fail to protect data, the consequences can range from identity theft and financial loss to reputational damage. Strategies such as data encryption, anonymization, pseudonymization, and minimization are among the most important measures for reducing the risks associated with data breaches and cyberattacks. They help ensure that organizations handle data fairly and in line with legal and ethical standards.

However, challenges remain. Cybercrime will keep evolving, and hackers will continue to devise more creative and widespread schemes for attacking people and businesses. The ethical balance between innovation and privacy also remains an ever-present concern. Technological advancements such as artificial intelligence, big data analytics, and blockchain bring great benefits, but they can also lead to increased monitoring of activities, misuse of data, and loss of personal control. To handle these situations, privacy should be built in from the very beginning, security should be checked regularly, and privacy principles should be followed throughout.

Data privacy is more than a technological or legal issue; it is a matter of human rights in the digital age. Organizations can and should be held to high standards when it comes to protecting data online.

Literature

Cloudflare. (n.d.). What is data privacy? 2024, https://www.cloudflare.com/learning/privacy/what-is-data-privacy/

European Commission. (n.d.). What is personal data? 2024, https://commission.europa.eu/law/law-topic/data-protection/reform/what-personal-data_en

WebsitePolicies. (n.d.). What is data privacy? Definition, importance, and best practices. 2024, https://www.websitepolicies.com/blog/data-privacy#key-components-of-data-privacy

Syteca. (n.d.). 4 ways to detect and prevent the misuse of data. 2024, https://www.syteca.com/en/blog/4-ways-detect-and-prevent-misuse-data

DataGuard. (n.d.). What is a regulatory framework? 2024, https://www.dataguard.co.uk/blog/what-is-a-regulatory-framework

GDPR.eu. (n.d.). What is GDPR? 2024, https://gdpr.eu/what-is-gdpr

Office of the Attorney General. (n.d.). California Consumer Privacy Act (CCPA). 2024, https://oag.ca.gov/privacy/ccpa

360 Business Law. (n.d.). Global data privacy regulations: Navigating the complex web of compliance. 2024, https://www.360businesslaw.com/blog/global-data-privacy-regulations-navigating-the-complex-web-of-compliance/

GRC World Forums. (2021, July 12). Data masking, anonymisation or pseudonymisation? 2024, https://www.grcworldforums.com/data-management/data-masking-anonymisation-or-pseudonymisation/12.article

Cloudian. (n.d.). Data encryption: The ultimate guide. 2024, https://cloudian.com/guides/data-protection/data-encryption-the-ultimate-guide/

Kiteworks. (n.d.). Data minimization. 2024, https://www.kiteworks.com/risk-compliance-glossary/data-minimization/

Alumio. (n.d.). The future of data security: Predictions and new trends. 2024, https://www.alumio.com/blog/the-future-of-data-security-predictions-and-new-trends

TrustCloud. (n.d.). Data protection in technological advancements: Balancing between innovation and privacy. 2024, https://community.trustcloud.ai/docs/grc-launchpad/grc-101/governance/data-protection-in-technological-advancements-balancing-between-innovation-and-privacy/

Statista. (n.d.). Total value of worldwide data breach fines and settlements from 2015 to 2022. https://www.statista.com/statistics/1170520/worldwide-data-breach-fines-settlements/

Statista. (n.d.). Total damage caused by cybercrime in the United States from 2001 to 2022. https://www.statista.com/statistics/267132/total-damage-caused-by-by-cybercrime-in-the-us/